April's Hidden AI Gem

I have been seeing an abundance of articles about how Anthropic’s Mythos is going to end the world, but another model released in April currently has me over the moon. Google announced Gemma 4 on April 2, 2026. Why does this matter to you? Whether you’re tinkering in your garage, building a startup, or running an enterprise, it’s a total game changer.

What is Gemma 4?

Gemma 4 is a family of open models released by Google DeepMind, including a mix of small, mixture-of-experts (MoE), and dense models. The family ranks #3 in open model performance, outperforming significantly larger models.

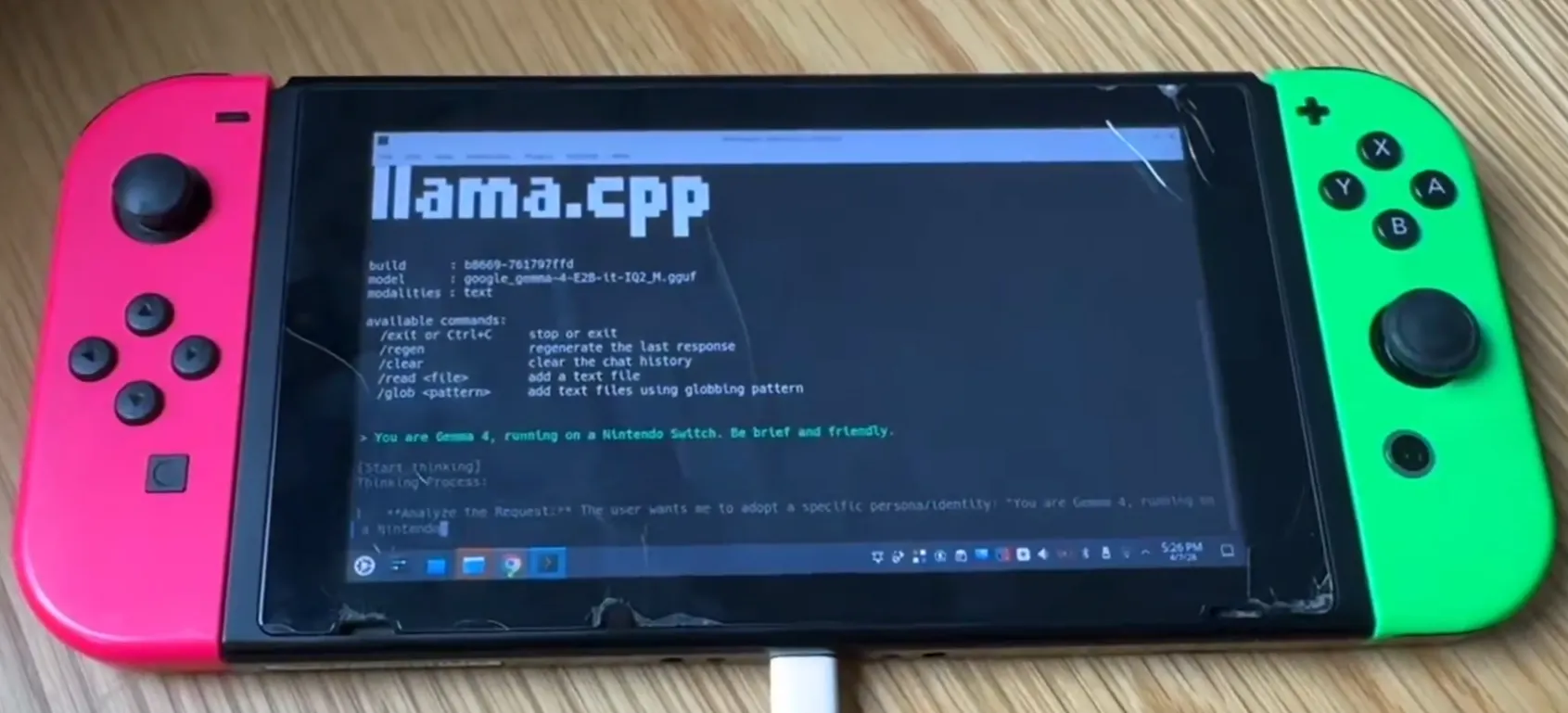

The models support text and image inputs, and two of them also support audio input. There is a wide range of model sizes to suit different kinds of consumer hardware. A developer was even able to get one running on a Nintendo Switch! Yes, the console known for limited frame rates and lower-quality textures is able to run these.

The model family spans from the efficient 2B and 4B models, which are small enough to fit on a phone or old laptop, all the way up to the 26B MoE and 31B dense models for more demanding tasks. The 26B MoE is a powerful compromise because it activates only the parts it needs for any particular query, so each response costs far less compute than its total size suggests while still letting it outperform much larger models.
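If "only activating the parts it needs" sounds abstract, here is a toy sketch of the general idea behind mixture-of-experts routing. To be clear, this is not Gemma 4's actual architecture: the four experts, the router scores, and the choice of two active experts are all made up for illustration.

```python
import math

def softmax(scores):
    """Turn raw router scores into probabilities that sum to 1."""
    peak = max(scores)
    exps = [math.exp(s - peak) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]

def route_top_k(router_scores, k):
    """Pick the k highest-scoring experts and renormalize their weights."""
    probs = softmax(router_scores)
    top = sorted(range(len(probs)), key=lambda i: probs[i], reverse=True)[:k]
    norm = sum(probs[i] for i in top)
    return [(i, probs[i] / norm) for i in top]

def moe_forward(x, experts, router_scores, k=2):
    """Run only the chosen experts and blend their outputs by weight."""
    chosen = route_top_k(router_scores, k)
    return sum(weight * experts[i](x) for i, weight in chosen)

# Toy experts (hypothetical): each just scales its input.
# In a real model, each expert is a neural network layer.
experts = [lambda x, s=s: x * s for s in (1.0, 2.0, 3.0, 4.0)]
router_scores = [0.1, 2.0, 0.3, 1.5]  # made-up scores for a single token

chosen = route_top_k(router_scores, k=2)
output = moe_forward(10.0, experts, router_scores, k=2)
print("experts used:", [i for i, _ in chosen])
print("blended output:", round(output, 2))
```

Only two of the four experts do any work here, which is the whole trick: the model carries a lot of capacity but pays for only a slice of it on each token.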

For the hobbyist

One of the most difficult aspects of using generative AI for personal use is managing costs and tokens. Now, with fairly modest hardware, you can run either the effective 4B or the 26B MoE model locally. At a time when providers such as Anthropic are locking you out of third-party tools like OpenClaw, this is a total game changer. Set up your favorite tool, like n8n or OpenClaw, on your machine, connect it to Gemma 4, and do anything from automatically responding to emails to architecting entire applications.
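For a sense of what "connect it to Gemma 4" can look like under the hood, here is a minimal sketch. It assumes you are serving the model through a local runner that exposes an OpenAI-style chat endpoint. The URL and the model tag below are placeholders I picked for illustration, so check your own runner's documentation for the real values.

```python
import json
import urllib.request

# Assumptions: both values are placeholders, not confirmed Gemma 4 settings.
BASE_URL = "http://localhost:11434/v1/chat/completions"
MODEL_TAG = "gemma4"  # hypothetical tag; use whatever your runner lists

def build_chat_request(prompt, base_url=BASE_URL, model=MODEL_TAG):
    """Build (but do not send) a chat request for a local model server."""
    payload = {
        "model": model,
        "messages": [{"role": "user", "content": prompt}],
    }
    return urllib.request.Request(
        base_url,
        data=json.dumps(payload).encode("utf-8"),
        headers={"Content-Type": "application/json"},
        method="POST",
    )

def ask_local_model(prompt):
    """Send the prompt and return the reply text. Needs a running local server."""
    with urllib.request.urlopen(build_chat_request(prompt)) as response:
        body = json.load(response)
    return body["choices"][0]["message"]["content"]

# Building the request works offline; sending it needs the server to be up.
request = build_chat_request("Draft a polite reply declining this meeting.")
print(request.full_url)
```

Tools like n8n typically do the same thing behind a settings screen: you point them at a base URL and a model name, and nothing leaves your machine.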

For the entrepreneur

Any small business trying to use AI tools has almost certainly hit one of two problems: running out of tokens or getting an unexpectedly large bill. Gemma 4 solves both of these. For those just starting out, run the largest model that runs efficiently on your computer. If it can run on a Switch, it can run on whatever toaster of a PC you have. As your business scales, you can install a dedicated computer to run larger models and multi-agent setups. You can even create a whole team of virtual employees without being beholden to the latest usage restrictions from OpenAI and Anthropic. Additionally, Gemma 4 is published under an Apache 2.0 license, which means you can build products on top of it and use it commercially.
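How do you figure out the largest model your machine can handle? A rough back-of-the-envelope check is parameters times bits per weight. The sketch below uses the nominal sizes named in this post, which may not match the exact parameter counts, and it covers the weights only. The 80% headroom figure is my own assumption to leave room for context and the rest of your system.

```python
# Nominal sizes in billions of parameters, as named in this post (assumed,
# not exact counts). Listed smallest to largest.
SIZES_BILLIONS = {"2B": 2, "4B": 4, "26B MoE": 26, "31B dense": 31}

def weight_memory_gb(params_billions, bits_per_weight):
    """Approximate memory for the weights alone, in GB (1 GB = 1e9 bytes)."""
    return params_billions * bits_per_weight / 8

def largest_that_fits(available_gb, bits_per_weight, headroom=0.8):
    """Return the largest listed model whose weights fit in the memory budget."""
    budget = available_gb * headroom  # headroom is an assumed safety margin
    fitting = [
        name
        for name, size in SIZES_BILLIONS.items()
        if weight_memory_gb(size, bits_per_weight) <= budget
    ]
    return fitting[-1] if fitting else None

for memory_gb in (8, 16, 24):
    choice = largest_that_fits(memory_gb, bits_per_weight=4)
    print(f"{memory_gb} GB at 4-bit: {choice}")
```

One catch worth knowing: a mixture-of-experts model uses only part of itself per query, but all of its weights still need to be loaded, so the 26B MoE is budgeted at its full size here.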

For the enterprise

Everything that applies to the entrepreneur also applies to the enterprise, but there is an additional constraint often found with large customers: security. Large companies, particularly in finance, healthcare, and government, often require that models run completely on their own infrastructure with no external API calls. Deploy Gemma 4 on your own servers and you no longer have to worry about exposing your data to be used for training the next flagship model. And don’t be duped into thinking that performance is bad just because it’s an open model. While it’s not as powerful as GPT-5.5 and Opus 4.7, it is comparable with the previous generation’s much larger models. You didn’t have a problem building on the previous GPT and Opus releases a few months ago, so why wouldn’t you be OK with a similarly performing model today?

Open AI, for everyone

No matter where you are in your AI journey, Gemma 4 has something great to offer. Whether you need a personal assistant or are looking to create your own fine-tuned small language model, Gemma 4 is a great candidate. And the best part is, the model weights are open and it’s completely free. At a time when so much technology is subscription-based, tools like Gemma 4 are precious.